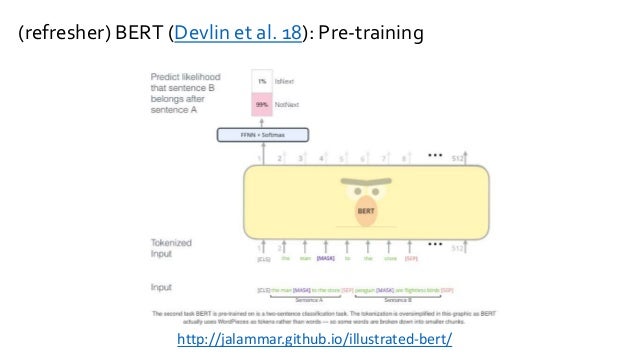

1. Caruana, R.: Multitask learning: a knowledge-based source of inductive bias. In: Proceedings of the Tenth International Conference on Machine Learning (1993)
2. Chen, Z., Badrinarayanan, V., Lee, C.Y., Rabinovich, A.: GradNorm: gradient normalization for adaptive loss balancing in deep multitask networks.
3. Collobert, R., Weston, J.: A unified architecture for natural language processing: deep neural networks with multitask learning. In: Proceedings of the 25th International Conference on Machine Learning
4. Conneau, A., Kiela, D., Schwenk, H., Barrault, L., Bordes, A.: Supervised learning of universal sentence representations from natural language inference data.
5. Dai, A.M., Le, Q.V.: Semi-supervised sequence learning.
6. Devlin, J., Chang, M.W., Lee, K., Toutanova, K.: BERT: pre-training of deep bidirectional transformers for language understanding.
7. Howard, J., Ruder, S.: Universal language model fine-tuning for text classification.
8. Kiros, R., et al.: Skip-thought vectors. In: Advances in Neural Information Processing Systems
9. Le, Q., Mikolov, T.: Distributed representations of sentences and documents. In: International Conference on Machine Learning
10. Lin, Z., et al.: A structured self-attentive sentence embedding.
11. Liu, L., et al.: Empower sequence labeling with task-aware neural language model.